Governing AI Requires More Than Controls — It Requires Visibility

Last week, I wrote about why blocking AI is easy—but governing it is where most organizations fail.

That post focused on permissioning: what really happens the moment a user flips an AI connector from “Needs approval” to “Always allow.”

This article is about what comes next.

Because once you allow it… you need to see it.

Claude Cowork Is an Identity Problem, Not an AI Problem

Tools like Claude Cowork don’t introduce brand‑new risk.

They accelerate access to existing risk.

When an AI operates with delegated user identity, it can:

- Read and search email, Teams, and SharePoint

- Act across files and workloads at machine speed

- Execute actions the user barely understands—yet fully owns

From a security perspective, that’s not an “AI feature.”

That’s identity, access, and behavior at scale.

And scale is where most security programs break.

Detections Are Necessary—but They’re Not Enough

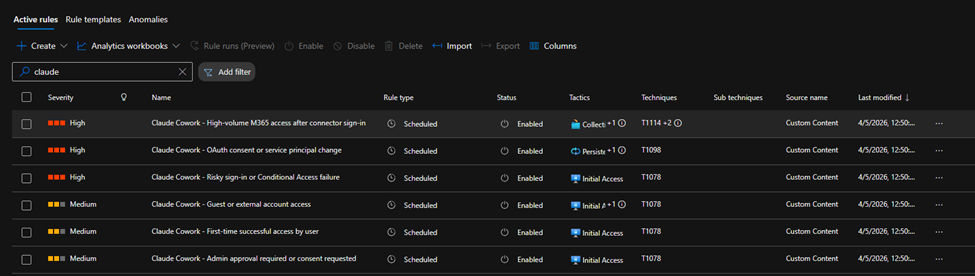

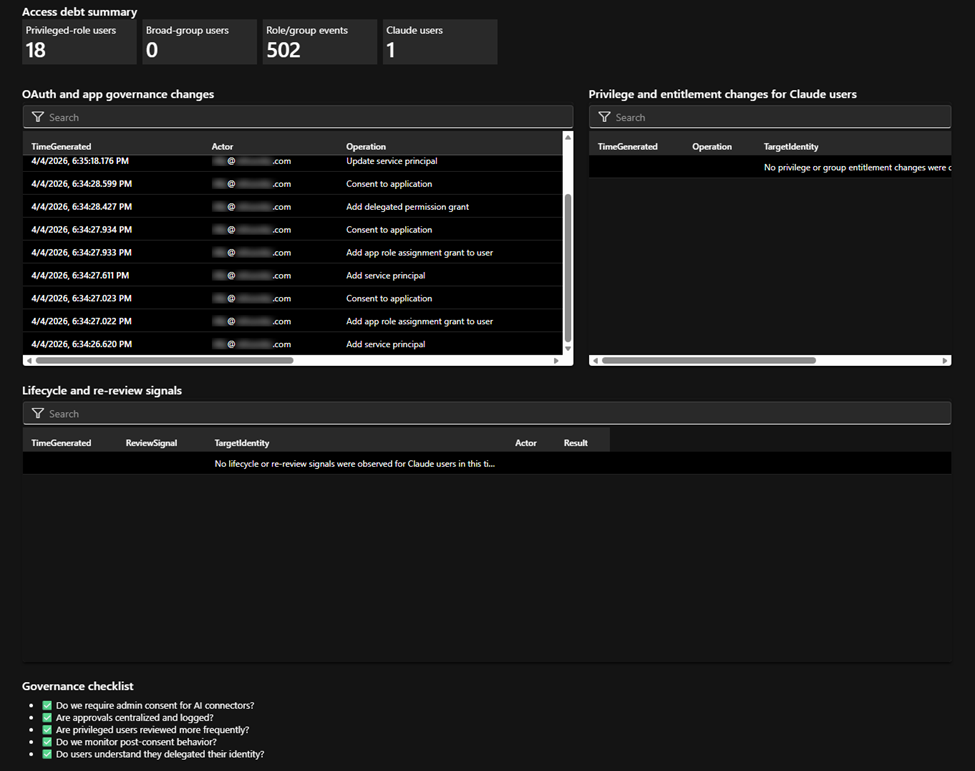

We’ve already been building custom Sentinel analytic rules to detect Cowork-style behavior:

- First-time or anomalous M365 access after connector enablement

- OAuth consent and service principal changes

- High-volume access patterns tied to AI tooling

- Risky sign-ins correlated with delegated AI usage
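To make the first pattern concrete, here is a minimal sketch of the logic in Python. It is an illustration only, not the Sentinel rules themselves (those would be written in KQL against real log tables). The event fields `user`, `workload`, and `time`, the `enabled_at` mapping, and the 30-day lookback are all assumptions made for the example.

```python
from datetime import datetime, timedelta


def first_time_access_after_enablement(events, enabled_at, lookback=timedelta(days=30)):
    """Return (user, workload) pairs first seen only after connector enablement.

    events: iterable of dicts with hypothetical keys "user", "workload", "time".
    enabled_at: dict mapping user -> datetime the AI connector was enabled.
    lookback: how far before enablement to look for prior access (assumed value).
    """
    seen_before = set()  # (user, workload) pairs accessed before enablement
    flagged = []         # pairs that appear for the first time after enablement

    for event in sorted(events, key=lambda e: e["time"]):
        user, workload, when = event["user"], event["workload"], event["time"]
        enabled = enabled_at.get(user)
        if enabled is None:
            continue  # user never enabled the connector; out of scope here

        key = (user, workload)
        if when < enabled:
            # Only count prior access that falls inside the lookback window.
            if enabled - when <= lookback:
                seen_before.add(key)
        elif key not in seen_before and key not in flagged:
            flagged.append(key)

    return flagged


# Hypothetical sample data for illustration.
sample_events = [
    {"user": "alice", "workload": "SharePoint", "time": datetime(2024, 1, 1)},
    {"user": "alice", "workload": "SharePoint", "time": datetime(2024, 1, 10)},
    {"user": "alice", "workload": "Exchange", "time": datetime(2024, 1, 11)},
    {"user": "bob", "workload": "Teams", "time": datetime(2024, 1, 12)},
]
sample_enabled_at = {"alice": datetime(2024, 1, 5)}

print(first_time_access_after_enablement(sample_events, sample_enabled_at))
```

In this sample, only alice's Exchange access is flagged: she had touched SharePoint before enabling the connector, and bob never enabled it.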

Those detections work.

But here’s the gap we kept seeing in real environments:

Security teams could detect the activity—but they couldn’t quickly understand the usage.

And if your SOC can’t explain what’s happening, governance turns into guesswork.

From Alerts to Operator Clarity

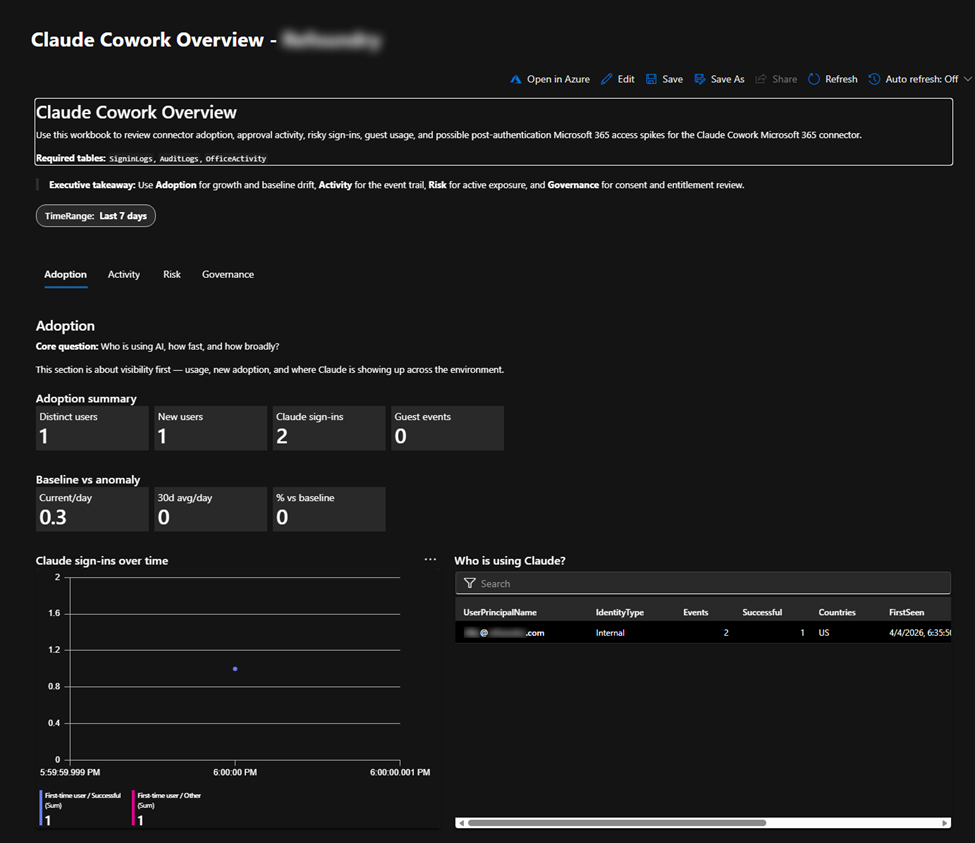

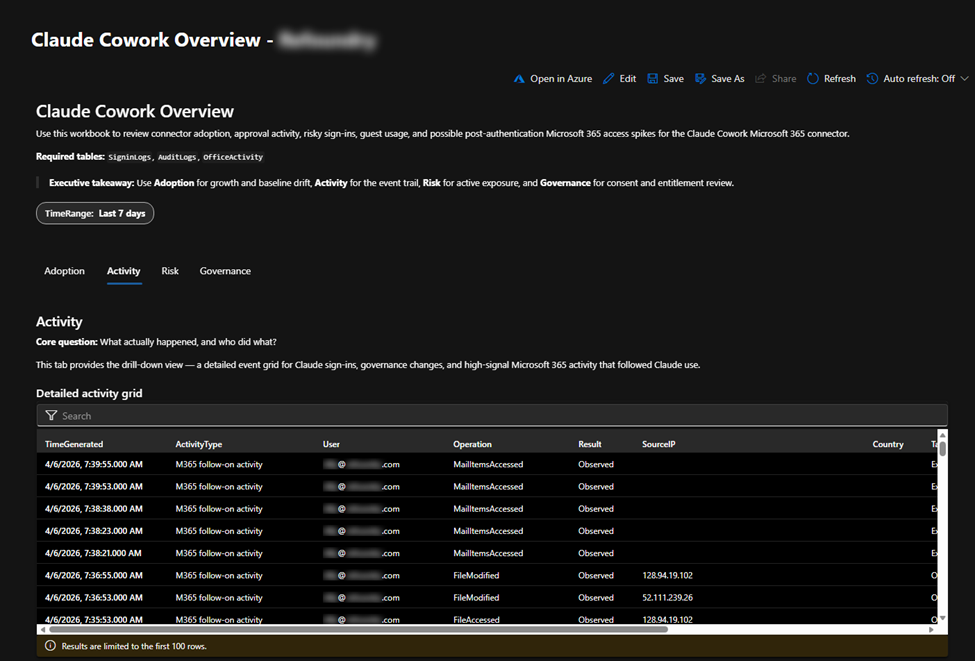

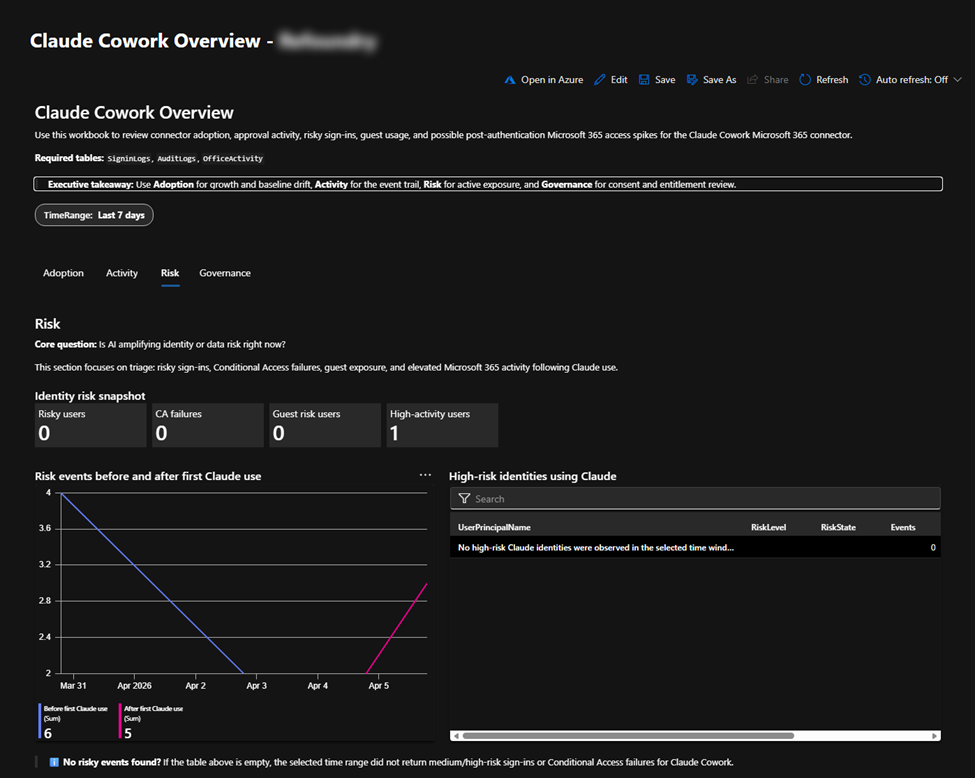

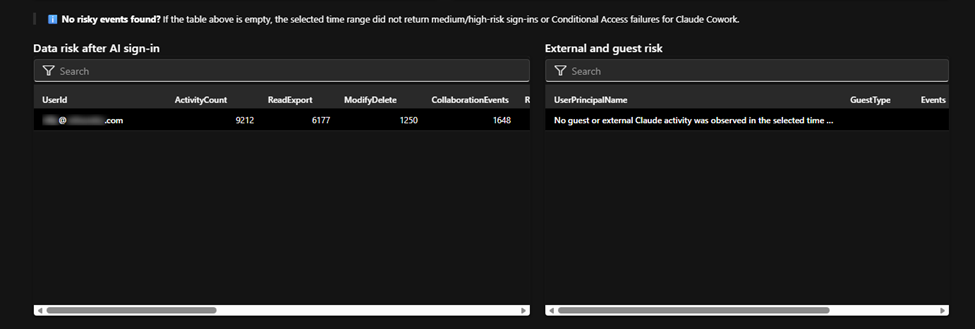

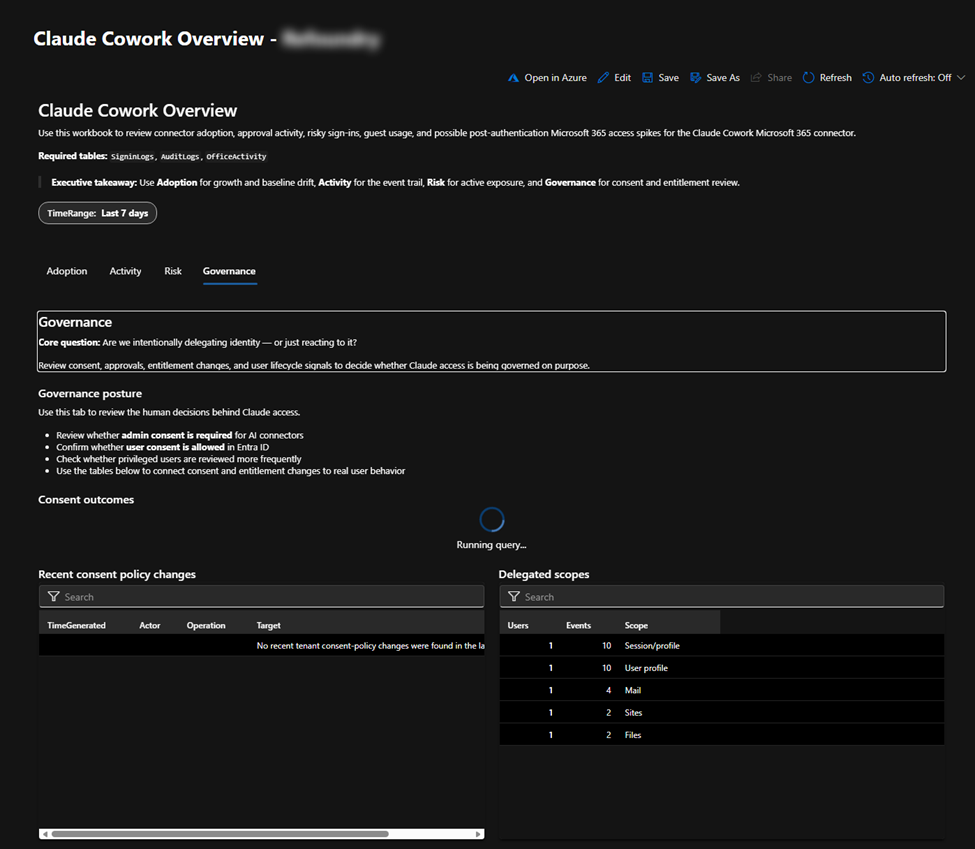

That’s why we built a Defender XDR workbook backed by Sentinel—specifically to visualize Cowork usage in the environment.

Not just alerts.

Not just logs.

Context.

The workbook answers the questions security leaders actually ask:

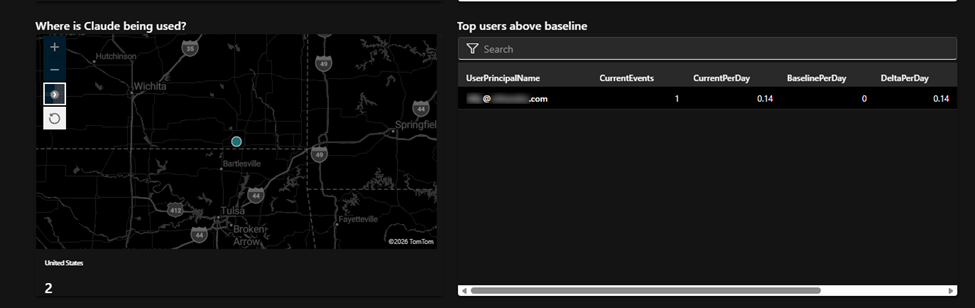

- Where is Cowork being used today?

- Which users are delegating access—and to what?

- What workloads are being touched, and how frequently?

- What does “normal” look like before it becomes risky?
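The kind of aggregation behind questions like these can be sketched in a few lines of Python. This is not the workbook's implementation; the event fields `user` and `workload` and the "three times the historical average" threshold are assumptions chosen only to show the idea of counting usage and comparing it to a baseline.

```python
from collections import Counter, defaultdict


def summarize_usage(events):
    """Count accesses per user and workload.

    events: iterable of dicts with hypothetical keys "user" and "workload".
    Returns {user: {workload: count}}.
    """
    per_user = defaultdict(Counter)
    for event in events:
        per_user[event["user"]][event["workload"]] += 1
    return {user: dict(counts) for user, counts in per_user.items()}


def exceeds_baseline(today_count, history, factor=3.0):
    """Return True if today's count is more than `factor` times the historical mean.

    history: list of past daily counts. With no history there is no baseline,
    so nothing is flagged. The factor of 3.0 is an arbitrary illustrative value.
    """
    if not history:
        return False
    baseline = sum(history) / len(history)
    return today_count > factor * baseline


# Hypothetical sample data for illustration.
sample_events = [
    {"user": "alice", "workload": "SharePoint"},
    {"user": "alice", "workload": "SharePoint"},
    {"user": "alice", "workload": "Exchange"},
    {"user": "bob", "workload": "Teams"},
]
print(summarize_usage(sample_events))
print(exceeds_baseline(40, [10, 10, 10]))
```

The per-user, per-workload counts answer "who is touching what, and how often," while the baseline comparison is one simple way to express "normal" before it drifts into risky territory.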

This isn’t a marketing dashboard.

It’s an operator-focused view that turns raw detections into something you can reason about, explain to leadership, and act on with confidence.

Why This Matters Now

AI governance fails when organizations treat AI as something separate from their security foundation.

It isn’t.

Cowork lives squarely in your identity and control plane—which means Defender XDR and Sentinel are exactly where this visibility belongs.

We built this solution the same way we build everything at Refoundry:

- Code-first: repeatable, versioned, deployable

- Automation-heavy: minimal operational drag

- Standards-based: native Defender XDR + Sentinel, no bolt-ons

- Experience-driven: informed by what breaks at scale—not what demos well

Most importantly, it shortens time to value: your SOC gets a working view of Cowork usage without first having to build one.

Because if your SOC can’t quickly see how AI is being used, you’re already behind the risk curve.

The Real Takeaway

Blocking AI doesn’t work.

Allowing it blindly is worse.

The organizations that get this right will be the ones that:

- Treat AI tooling as delegated identity

- Detect and visualize behavior

- Govern usage without killing momentum

AI didn’t change the rules.

It just removed the margin for error.

If you’re enabling Cowork—or anything like it—make sure your visibility keeps up.
