Blocking AI is easy. Governing it is where most organizations fail.

Most organizations are not ready for what “Always allow” actually means in tools like Claude Cowork.

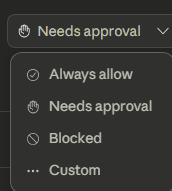

By default, that setting is “Needs approval.” That’s intentional.

But the moment a user flips it to “Always allow,” they’ve effectively delegated their identity.

Not just access… authority.

Now you have:

- AI operating with user-level permissions

- Access to email, SharePoint, Teams, files

- The ability to take action at scale

- And a user with little real understanding of what could go wrong

Let’s be clear: this is not an argument to block AI tools.

That approach doesn’t work. Users will route around it, and the business will push for adoption anyway.

The answer is responsible adoption.

That means understanding:

- What’s being granted

- What actions are possible

- Where controls need to exist

- And how to monitor behavior after the fact

This isn’t hypothetical.

The real risks are:

- Bulk data modification

- Accidental deletion

- Oversharing sensitive content

- Or actions executed based on bad prompts or hallucinated reasoning

And the most dangerous part?

👉 The user trusts it.

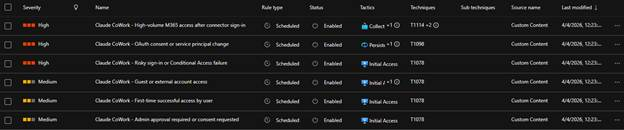

We’re already seeing this pattern emerge, which is why our MXDR (Defender XDR + Sentinel) team is proactively deploying detections across our customers to identify:

- High-volume M365 access shortly after a connector is enabled

- OAuth consent / service principal changes

- Risky sign-ins tied to AI tool usage

- First-time or anomalous access patterns
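As an illustration only, the first detection in that list (high-volume M365 access after connector enablement) comes down to correlating a consent event with the access volume that follows it. The Python sketch below assumes a simplified, hypothetical event shape (`Event` with `user`, `time`, `kind`) and placeholder `window` and `threshold` values. It is not the KQL or table schema used in Defender XDR or Sentinel, and not the actual detection logic described above.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    """Simplified, hypothetical audit record (not a real Defender or Sentinel schema)."""
    user: str
    time: datetime
    kind: str  # assumed labels: "consent" (connector/app consent) or "access" (M365 data access)


def flag_post_consent_spikes(events, window=timedelta(hours=1), threshold=500):
    """Return users whose access count within `window` after their first consent exceeds `threshold`.

    The window and threshold are placeholder values; a production rule would
    baseline each user's normal volume instead of using a fixed number.
    """
    # Find each user's earliest consent event.
    first_consent = {}
    for e in sorted(events, key=lambda e: e.time):
        if e.kind == "consent" and e.user not in first_consent:
            first_consent[e.user] = e.time

    # Count access events that fall inside the post-consent window.
    counts = defaultdict(int)
    for e in events:
        start = first_consent.get(e.user)
        if e.kind == "access" and start is not None and start <= e.time < start + window:
            counts[e.user] += 1

    # Users with no consent event are never counted, so they are never flagged.
    return sorted(user for user, n in counts.items() if n > threshold)
```

Access that happens before consent, and users who never consented, are deliberately ignored here; a real rule would pair this with the "first-time or anomalous access" signal rather than rely on one fixed threshold.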

Because this is not just a “new tool” problem.

This is an identity + control plane problem.

If you’re enabling tools like Claude Cowork, you need to be asking:

- Do we have app consent policies enforced?

- Are approvals centralized and governed?

- Do we understand what permissions are being granted?

- Are we monitoring post-consent behavior?

- Do users understand they are delegating their identity?
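The permissions question is easier to answer with the grants in hand. A minimal Python sketch, assuming delegated grants have already been exported (for example, from the Microsoft Graph `/oauth2PermissionGrants` endpoint) into dictionaries with `clientId`, `consentType`, and a space-separated `scope` string. The `HIGH_RISK_SCOPES` set and the `review_grants` helper are hypothetical; which scopes count as high-risk is a policy decision for each organization.

```python
# Illustrative only: this scope list is an assumption, not an official Microsoft risk rating.
HIGH_RISK_SCOPES = frozenset({
    "Mail.ReadWrite",
    "Mail.Send",
    "Files.ReadWrite.All",
    "Sites.ReadWrite.All",
    "Chat.ReadWrite",
    "ChannelMessage.Send",
})


def review_grants(grants, high_risk=HIGH_RISK_SCOPES):
    """Flag delegated grants that include write or send scopes.

    Each grant is assumed to be a dict shaped like a Microsoft Graph
    oauth2PermissionGrant: `clientId`, `consentType`, and `scope`
    (a space-separated string of delegated permissions).
    """
    findings = []
    for grant in grants:
        # split() with no argument also tolerates leading/trailing spaces.
        scopes = set((grant.get("scope") or "").split())
        risky = sorted(scopes & high_risk)
        if risky:
            findings.append({
                "clientId": grant.get("clientId"),
                # "AllPrincipals" means the grant applies to every user in the tenant.
                "tenantWide": grant.get("consentType") == "AllPrincipals",
                "riskyScopes": risky,
            })
    return findings
```

Tenant-wide grants deserve extra scrutiny, because a single consent then applies to every user rather than only the person who clicked “Accept.”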

AI didn’t introduce new risk. It accelerated access to existing risk.

And if your identity foundation isn’t ready… your AI strategy isn’t either.
