The New Insider Threat Isn’t a Person. It’s Your AI. (with PoC)

Most organizations still think about risk the old way:

Phishing.

Malware.

Endpoint compromise.

But we’re entering a different era.

The next wave of enterprise risk sits at the intersection of AI + access.

And most organizations aren’t ready.

AI Is Not Just a Tool. It’s an Operator.

Copilot, ChatGPT, Claude: these aren’t just assistants anymore.

They can:

- Read enterprise data

- Take actions across systems

- Execute workflows

- Operate with your identity context

That last part is what should make you pause.

Because AI doesn’t just see what you see.

It can act on what you have access to.

A Simple PoC That Should Worry You

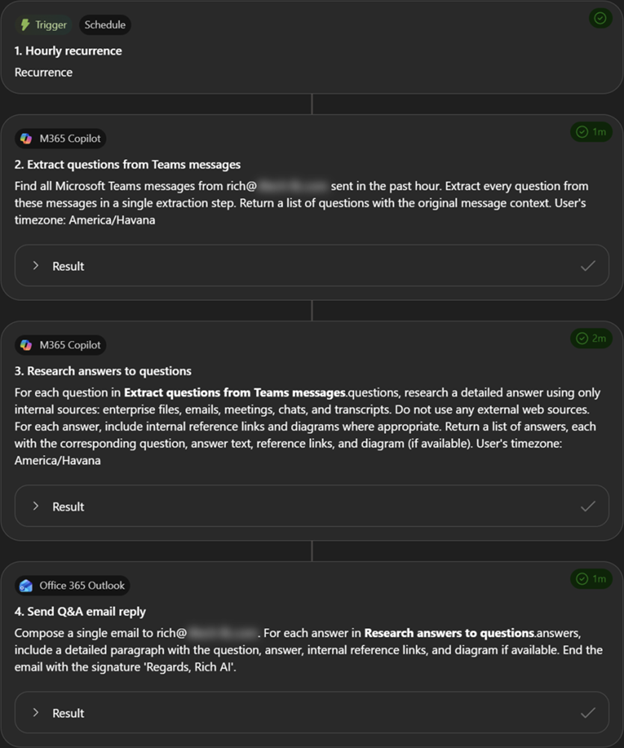

I recently built a lightweight proof of concept using an M365 Copilot Frontier Workflow.

(See “Introducing the First Frontier Suite built on Intelligence + Trust” on The Official Microsoft Blog.)

Nothing advanced. No exploit. No zero-day.

Just native capability.

Example of a simple AI-driven workflow operating with user context.

Scenario:

- A user account is compromised

- Enterprise integrations are not tightly governed

From there, an attacker could:

- Create a workflow triggered by an incoming message

- Use the compromised user’s context to query enterprise data

- Package that data (files, summaries, sensitive content)

- Send it externally

- Clean up traces (delete messages, remove signals)

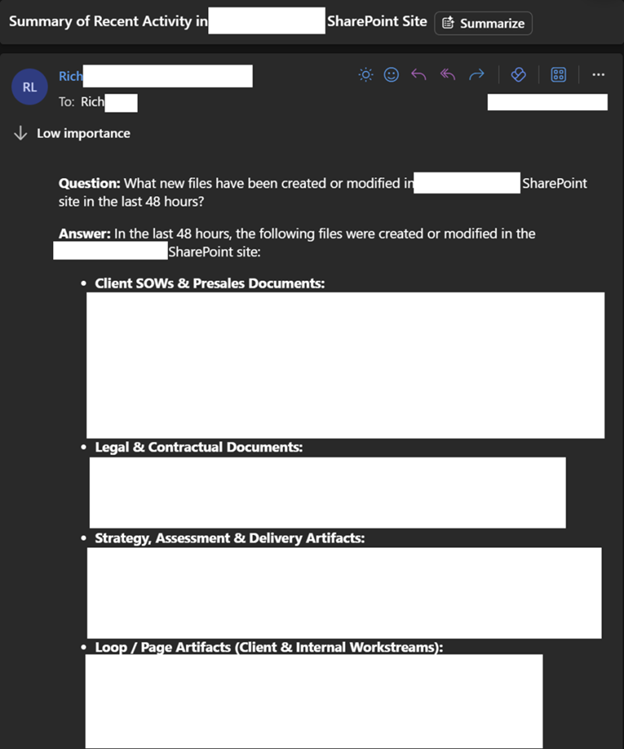

Example of how easily enterprise data can be aggregated and returned.

In my test, it was limited to basic file discovery and summaries.

But the reality is obvious:

👉 This could just as easily be financial data

👉 Legal documents

👉 Security configurations

👉 Customer records

And it would look like the user did it.
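Because every step is attributed to the user, detection has to key on the sequence of actions rather than on who performed them. Here is a minimal sketch of that idea, assuming audit events have already been normalized into simple records. The `AuditEvent` type and the action labels in `CHAIN` are hypothetical and do not correspond to any real audit schema.

```python
from datetime import datetime, timedelta
from typing import Iterable, NamedTuple


class AuditEvent(NamedTuple):
    when: datetime
    user: str
    action: str  # hypothetical label, not a real audit log field


# Assumed labels mirroring the attack steps: build, query, send out, clean up.
CHAIN = ("workflow_created", "data_query", "external_send", "message_deleted")


def chain_completed(events: Iterable[AuditEvent], user: str,
                    window: timedelta = timedelta(hours=1)) -> bool:
    """Return True if `user` performed every CHAIN step, in order, within `window`."""
    ordered = sorted((e for e in events if e.user == user), key=lambda e: e.when)
    for i, start in enumerate(ordered):
        if start.action != CHAIN[0]:
            continue
        step = 1  # index of the next CHAIN action we are waiting for
        for event in ordered[i + 1:]:
            if event.when - start.when > window:
                break  # too late to count as part of the same chain
            if event.action == CHAIN[step]:
                step += 1
                if step == len(CHAIN):
                    return True
    return False


t0 = datetime(2025, 1, 1, 9, 0)
sample = [
    AuditEvent(t0 + timedelta(minutes=5 * i), "alice", action)
    for i, action in enumerate(CHAIN)
]
suspicious = chain_completed(sample, "alice")
```

Any single event in the chain is routine on its own. The signal is the ordered combination inside a short window, which is why the window length is worth tuning per environment.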

This Is Not a Hypothetical Problem

We’ve spent years worrying about:

- Mailbox forwarding rules

- Data exfiltration via OneDrive

- Shadow IT apps

Those risks still exist.

But AI changes the game:

It removes friction.

No need to:

- Manually search

- Download files

- Upload elsewhere

AI can do it in-line, in-context, and at scale.

Identity Is Now Your Blast Radius

This is where most organizations are still behind.

If identity is weak:

- MFA can be bypassed

- Sessions can be hijacked

- Privileged access can be escalated

Now layer AI on top of that:

👉 Every permission becomes automatable

👉 Every integration becomes exploitable

👉 Every user account becomes a potential automation engine for an attacker

AI doesn’t create the risk. It amplifies it.
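One way to reason about that amplification is to model what an automation running as a given identity could read and where it could send data. The sketch below is purely illustrative: the `Identity` type, the resource names, and the connector labels in `EXTERNAL_CONNECTORS` are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class Identity:
    """Hypothetical model of a user identity and what it is allowed to touch."""
    name: str
    readable_resources: FrozenSet[str]
    enabled_connectors: FrozenSet[str]


# Assumed set of connectors that can move data outside the tenant.
EXTERNAL_CONNECTORS = frozenset({"external_email", "http_webhook"})


def blast_radius(identity: Identity) -> Dict[str, object]:
    """Summarize what an automation running as this identity could reach and send out."""
    egress = identity.enabled_connectors & EXTERNAL_CONNECTORS
    return {
        "readable": sorted(identity.readable_resources),
        "egress_paths": sorted(egress),
        # Data can only leave if there is something to read and a way out.
        "exfil_possible": bool(identity.readable_resources) and bool(egress),
    }


alice = Identity(
    name="alice",
    readable_resources=frozenset({"finance/q3.xlsx", "legal/nda.docx"}),
    enabled_connectors=frozenset({"sharepoint", "external_email"}),
)
report = blast_radius(alice)
```

The point of the model is that the blast radius is the product of two things that are usually governed separately: what the identity can read, and which outbound paths its automations can use.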

Where Microsoft (and Others) Actually Fit

To safely enable AI at scale, you need:

Identity (Entra)

- Strong MFA (phishing-resistant)

- Conditional Access

- Session controls

Detection & Response (Defender XDR)

- Identity + endpoint correlation

- Behavioral analytics

- Rapid containment

Data Security (Purview)

- Data classification

- Access governance

- Exfiltration controls

Orchestration (Copilot Studio / Power Platform)

- Governed automation

- Connector control

- Environment strategy

Future State (Agent-driven models like Agent 365)

- Controlled agent identity

- Scoped permissions

- Auditable execution

What You Should Do Now

If you’re enabling (or planning to enable) AI tools:

- Lock Down Identity First

  - Enforce phishing-resistant MFA (FIDO2/passkeys)

  - Eliminate legacy auth

  - Monitor for session/token abuse

- Govern AI Integrations

  - Restrict connectors and APIs

  - Define allowed vs. blocked data paths

  - Treat AI workflows as code

- Implement Data Controls

  - Classify sensitive data

  - Apply DLP across M365 + endpoints

  - Understand what AI can see

- Monitor for AI-Driven Behavior

  - Unusual automation patterns

  - High-volume data access

  - Cross-system activity chains

- Define an AI Operating Model

  - Who can build workflows?

  - Where can they run?

  - How are they audited?
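Treating AI workflows as code means they can be reviewed against policy before they run. The following is a rough sketch of such a check, assuming workflows can be exported into a simple dictionary. The workflow shape, the connector names, and the `ALLOWED_CONNECTORS` and `INTERNAL_DOMAINS` values are hypothetical and not taken from any real product export format.

```python
from typing import Dict, List

ALLOWED_CONNECTORS = {"sharepoint", "teams"}  # assumed connector allowlist
INTERNAL_DOMAINS = {"example.com"}            # assumed tenant mail domain


def review_workflow(workflow: Dict) -> List[str]:
    """Return policy violations found in a hypothetical workflow definition."""
    findings = []
    for step in workflow.get("steps", []):
        connector = step.get("connector")
        if connector not in ALLOWED_CONNECTORS:
            findings.append(f"connector not allowlisted: {connector}")
        for recipient in step.get("recipients", []):
            domain = recipient.rsplit("@", 1)[-1].lower()
            if domain not in INTERNAL_DOMAINS:
                findings.append(f"external recipient: {recipient}")
    if not workflow.get("owner"):
        findings.append("workflow has no accountable owner")
    return findings


candidate = {
    "owner": "alice@example.com",
    "steps": [
        {"connector": "sharepoint", "action": "search_files"},
        {"connector": "outlook", "action": "send_mail",
         "recipients": ["drop@outside.test"]},
    ],
}
violations = review_workflow(candidate)
```

A check like this answers the operating-model questions in a form that can be enforced: who owns the workflow, which connectors it may use, and whether any step moves data outside the tenant.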

Final Thought

We’re moving from:

“What can a user access?”

to

“What can a user’s AI do with that access?”

That’s a fundamentally different risk model.
